When Machines Earn and Spend: The Settlement Layer Nobody Built

The Agentic Economy Is Already Transacting. The Settlement Layer Is Not Ready.

CES 2026 did not simply showcase better hardware. It exposed a structural debt we have been postponing.

When Hyundai’s ATLAS humanoid moved across the floor without waiting for a human prompt — perceiving, evaluating, acting — it was no longer a demonstration of engineering capability. It was a proof of economic behavior.

The machine was not executing commands. It was making decisions with consequences.

That distinction matters more than it appears, because the moment autonomous action carries economic weight, the infrastructure underneath it becomes the question.

The bottleneck is no longer the robot.

It is the settlement layer.

Why Human-Centric Money Breaks

Money, as we know it, was designed around human decision cycles.

It assumes a biological actor who can sign, authorize, and bear legal responsibility. It assumes latency is acceptable. It assumes that somewhere in the chain, a human is watching.

Autonomous agents violate every one of these assumptions simultaneously.

A machine executing thousands of micro-transactions per minute cannot pause for manual authorization. An AI agent procuring compute resources across jurisdictions has no legal signature to offer a bank. And when a fractional-cent transaction fails in a high-frequency loop, there is no human standing by to resolve it.

This is not a performance problem.

It is an architectural one.

The rails were never built for passengers that don’t have hands.

Where Existing Systems Reveal the Gap

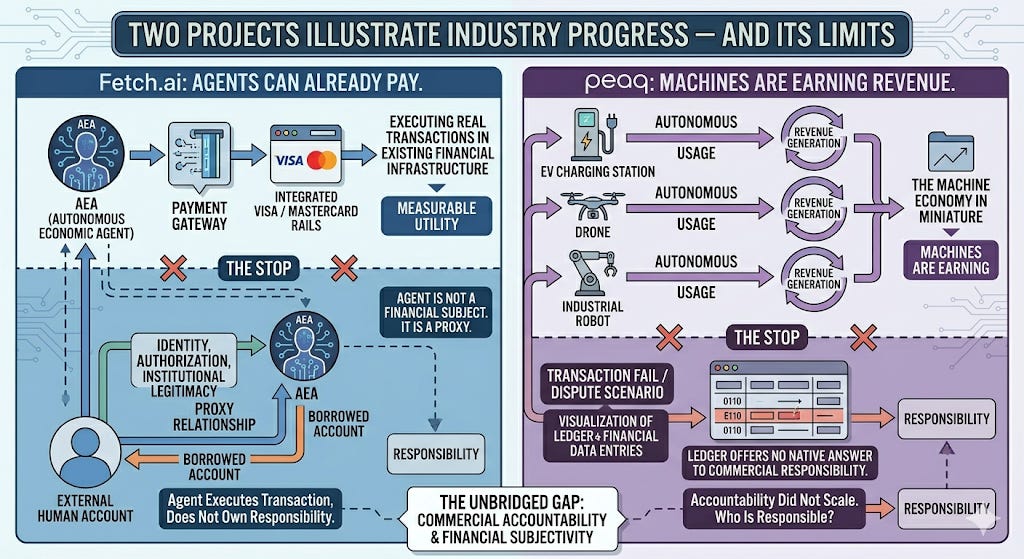

Two projects illustrate how far the industry has come — and exactly where it stops.

Fetch.ai demonstrated that agents can already pay.

By integrating with Visa and Mastercard rails, its Autonomous Economic Agents execute real transactions within existing financial infrastructure. This is meaningful. It replaces speculation with measurable utility.

But the agent is not a financial subject. It is a proxy. Identity, authorization, and institutional legitimacy all live outside the system — anchored to a human account the agent merely borrows from. The agent executes the transaction. It does not own the responsibility.

peaq moves in the opposite direction.

Here, machines are not merely paying — they are earning. EV charging stations, drones, and industrial robots generate revenue based on autonomous usage, with no human initiating each cycle. The machine economy, in miniature, already exists.

Yet when a transaction fails or a dispute arises, the ledger offers no native answer to the most basic question of commerce: who is responsible? Autonomy scaled. Accountability did not.

Across both models, the pattern is consistent. Execution works. Speed is sufficient. And the most essential component of legitimate commerce is structurally absent.

The Layer That Was Never Designed

Stablecoins were optimized for execution, not legitimacy. Smart contracts enforce rules, not responsibility. Payments can verify funds, but not authorization.

This is not a bug in any particular system. It is a gap in how the entire category was conceived. The industry built faster rails without asking who, or what, should be permitted to ride them.

For settlement to function inside an agentic economy, it requires something none of these systems were designed to provide: a cryptographic link between autonomous execution and accountable human origin.

Not surveillance. Not manual override. A verifiable chain of provenance — so that every action an agent takes can be traced back to the human node that authorized, owns, and ultimately answers for it.
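To make the idea of a provenance chain concrete, here is a minimal Python sketch. It is not InterLink's implementation or anyone's production design; every name in it (`issue_delegation`, `sign_action`, `trace_to_human`, the IDs and keys) is hypothetical. It also assumes shared-secret HMACs as a stand-in for the asymmetric signatures a real system would almost certainly use. The point is only the shape of the link: a human signs a grant, the agent signs each action under that grant, and a verifier can walk the record back to the human who answers for it.

```python
import hashlib
import hmac
import json


def sign(key: bytes, payload: dict) -> str:
    """Return a hex MAC over a canonical JSON encoding of the payload."""
    message = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def issue_delegation(human_id: str, human_key: bytes, agent_id: str, limit_cents: int) -> dict:
    """The human authorizes one agent, up to a spending limit (hypothetical format)."""
    grant = {"human_id": human_id, "agent_id": agent_id, "limit_cents": limit_cents}
    return {"grant": grant, "human_sig": sign(human_key, grant)}


def sign_action(agent_key: bytes, delegation: dict, amount_cents: int, payee: str) -> dict:
    """The agent signs a payment that embeds the delegation it acts under."""
    action = {
        "amount_cents": amount_cents,
        "payee": payee,
        "delegation_sig": delegation["human_sig"],
    }
    return {"action": action, "agent_sig": sign(agent_key, action), "delegation": delegation}


def trace_to_human(record: dict, human_keys: dict, agent_keys: dict):
    """Return the accountable human_id if the whole chain verifies, else None."""
    grant = record["delegation"]["grant"]
    human_key = human_keys.get(grant["human_id"])
    agent_key = agent_keys.get(grant["agent_id"])
    if human_key is None or agent_key is None:
        return None
    # 1. The grant really was signed by the named human.
    if not hmac.compare_digest(sign(human_key, grant), record["delegation"]["human_sig"]):
        return None
    # 2. The action really was signed by the delegated agent.
    if not hmac.compare_digest(sign(agent_key, record["action"]), record["agent_sig"]):
        return None
    # 3. The action points at this grant and stays within its limit.
    if record["action"]["delegation_sig"] != record["delegation"]["human_sig"]:
        return None
    if record["action"]["amount_cents"] > grant["limit_cents"]:
        return None
    return grant["human_id"]


# Illustrative keys and IDs only.
human_keys = {"human-001": b"human-secret"}
agent_keys = {"agent-7": b"agent-secret"}
delegation = issue_delegation("human-001", human_keys["human-001"], "agent-7", 500)
record = sign_action(agent_keys["agent-7"], delegation, 120, "charger-42")
print(trace_to_human(record, human_keys, agent_keys))  # human-001
```

Nothing here involves surveillance or manual override: the human signs once, at delegation time, and the agent acts freely inside that grant. If anyone alters the amount or swaps the grant afterward, verification fails and the record traces to no one.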

What InterLink Was Built to Address

Most systems in this space ask how to make agents faster, cheaper, or more autonomous. InterLink asks a prior question — and it is the only question that determines which systems survive:

who stands behind the agent?

Through its Human ID architecture and Mini-App Development Kit, InterLink binds agentic action to a verified human origin at the point of deployment.

An agent operating within this system does not float freely across the ledger. It carries provenance. Every settlement traces back to an accountable Human Node — not as an afterthought, but as a structural condition of participation.

This is not an alternative architecture. It is the layer the entire category assumed someone else would build.

The distinction matters: a system that accommodates agents retrofits identity onto an execution layer. A system designed with agency in mind treats identity as the condition on which execution is permitted.

InterLink was built on the second assumption. Most of the industry is still catching up to the first.
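The difference between retrofitting identity and treating it as a precondition can be shown in a few lines. The sketch below is a toy, not InterLink's API: the `Ledger` class, its `bind` and `settle` methods, and all IDs are hypothetical, and the "verification" of the human is assumed to have happened elsewhere. What it illustrates is ordering. The identity check runs before any value moves, and every entry records who is responsible.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical ledger where a human binding gates every settlement."""
    bindings: dict = field(default_factory=dict)  # agent_id -> human_id
    balances: dict = field(default_factory=dict)  # account -> cents
    entries: list = field(default_factory=list)

    def bind(self, agent_id: str, human_id: str) -> None:
        """Record the accountable human for an agent (assumed already verified)."""
        self.bindings[agent_id] = human_id

    def settle(self, agent_id: str, payee: str, amount_cents: int) -> dict:
        # Identity first: an unbound agent is rejected even if it has funds.
        human_id = self.bindings.get(agent_id)
        if human_id is None:
            raise PermissionError(f"{agent_id} has no accountable human origin")
        if self.balances.get(agent_id, 0) < amount_cents:
            raise ValueError("insufficient funds")
        self.balances[agent_id] -= amount_cents
        self.balances[payee] = self.balances.get(payee, 0) + amount_cents
        # Every entry answers "who is responsible?" without a later lookup.
        entry = {
            "agent": agent_id,
            "payee": payee,
            "amount_cents": amount_cents,
            "responsible": human_id,
        }
        self.entries.append(entry)
        return entry


ledger = Ledger(balances={"agent-7": 1000, "agent-x": 1000})
ledger.bind("agent-7", "human-001")
print(ledger.settle("agent-7", "charger-42", 120)["responsible"])  # human-001
```

In this toy, `agent-x` holds the same balance as `agent-7` but cannot settle anything, because funds alone are not what grants permission to transact.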

Stablecoins move value.

Ledgers decide validity.

InterLink provides the filter.

The Real Shift

ATLAS did not pause. It did not ask for authorization. It acted in ways indistinguishable from economic behavior — and the infrastructure underneath it was not ready.

A system that cannot assign responsibility cannot scale.

The signal from CES 2026 is unambiguous. Digital labor has arrived. And the machine economy will not be decided by who builds the fastest agent.

Speed scales execution.

Accountability selects survivors.

Automation is a technological milestone. Agency is an economic revolution — and revolutions require new foundations, not faster roads.

If you’d like to support my research, you may use my InterLink invitation code below. It also unlocks an instant mining boost and a Welcome Bonus for new users.

InterLink Referral Code: 905079415

Wallet Invitation Code : HSVZZPEJ (75% Commission Rebate)

❓ New to InterLink?

For a 🔗step-by-step guide, start with the pinned post at the top of my blog — Done.T Insight✨.

You don’t need capital. You just need five minutes.

Disclosure: This post contains referral links and reflects my personal research and experience. It is provided for informational purposes only and does not constitute financial advice.